Microsoft DP-500 Designing and Implementing Enterprise-Scale Analytics Solutions Using Microsoft Azure and Microsoft Power BI Online Training

Microsoft DP-500 Online Training

The questions for DP-500 were last updated on Jun 01, 2026.

- Exam Code: DP-500

- Exam Name: Designing and Implementing Enterprise-Scale Analytics Solutions Using Microsoft Azure and Microsoft Power BI

- Certification Provider: Microsoft

- Latest update: Jun 01, 2026

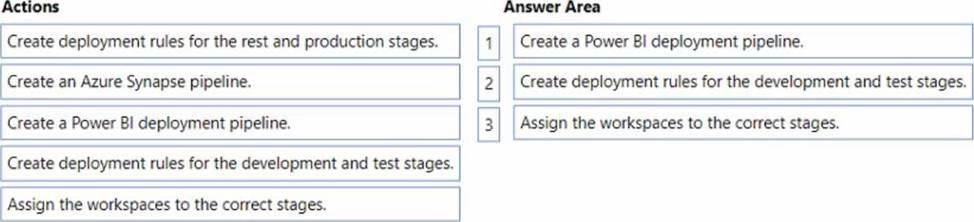

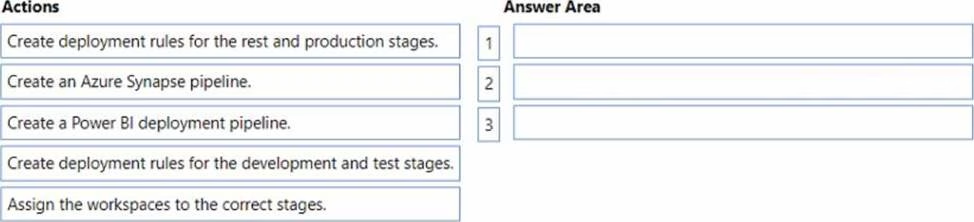

DRAG DROP

You need to ensure that the new process for deploying reports and datasets to the User Experience workspace meets the technical requirements.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

The enterprise analytics team needs to resolve the DAX measure performance issues.

What should the team do first?

- A . Use Performance analyzer in Power BI Desktop to get the DAX durations.

- B . Use DAX Studio to get detailed statistics on the server timings.

- C . Use DAX Studio to review the VertiPaq Analyzer metrics.

- D . Use Tabular Editor to create calculation groups.

You need to optimize the workflow for the creation of reports and the adjustment of tables by the enterprise analytics team.

What should you do?

- A . Add a tenant-level storage connection to Power BI.

- B . Create a linked service in workspace1.

- C . Create an integration runtime in workspace1.

- D . From the Tenant settings, enable Use global search for Power BI.

You need to meet the technical requirements for deploying reports and datasets to the User Experience workspace.

What should you do?

- A . From the Corporate Data Models and User Experience workspaces, select Allow contributors to update the app.

- B . From the Corporate Data Models and User Experience workspaces, set License mode to Premium per user.

- C . From the Tenant settings, set Allow specific users to turn on external data sharing to Enable.

- D . From the Developer settings, set Allow service principals to use Power BI APIs to Enable.

You need to recommend changes to the Power BI tenant to meet the technical requirements for external data sharing.

Which tenant setting should you recommend disabling?

- A . Allow shareable links to grant access to everyone in your organization

- B . Allow Azure Active Directory guest users to edit and manage content in the organization

- C . Users can reassign personal workspaces

- D . Show Azure Active Directory guests in lists of suggested people

The group registers the Power BI tenant as a data source.

You need to ensure that all the analysts can view the assets in the Power BI tenant. The solution must meet the technical requirements for Microsoft Purview and Power BI.

What should you do?

- A . Create a scan.

- B . Deploy a Power BI gateway.

- C . Search the data catalog.

- D . Create a linked service.

Topic 4, Misc. Questions

You develop a solution that uses a Power BI Premium capacity. The capacity contains a dataset that is expected to consume 50 GB of memory.

Which two actions should you perform to ensure that you can publish the model successfully to the Power BI service? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

- A . Increase the Max Offline Dataset Size setting.

- B . Invoke a refresh to load historical data based on the incremental refresh policy.

- C . Restart the capacity.

- D . Publish an initial dataset that is less than 10 GB.

- E . Publish the complete dataset.

DRAG DROP

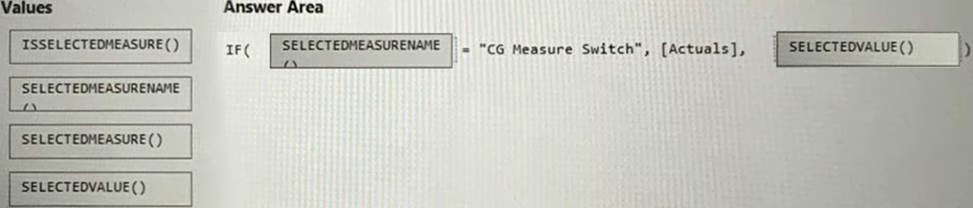

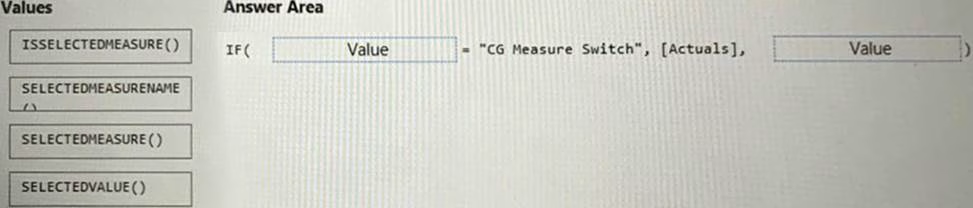

You have a Power BI dataset that contains the following measures:

• Budget

• Actuals

• Forecast

You create a report that contains 10 visuals.

You need to provide users with the ability to use a slicer to switch between the measures in two visuals only.

You create a dedicated measure named cg Measure switch.

How should you complete the DAX expression for the Actuals measure? To answer, drag the appropriate values to the targets. Each value may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content. NOTE: Each correct selection is worth one point.

You have a Power BI workspace named Workspace1 in a Premium capacity. Workspace1 contains a dataset.

During a scheduled refresh, you receive the following error message: "Unable to save the changes since the new dataset size of 11,354 MB exceeds the limit of 10,240 MB."

You need to ensure that you can refresh the dataset.

What should you do?

- A . Turn on Large dataset storage format.

- B . Connect Workspace1 to an Azure Data Lake Storage Gen2 account.

- C . Change License mode to Premium per user.

- D . Change the location of the Premium capacity.
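To put the figures in the error message in perspective, the following is a minimal arithmetic sketch. It assumes binary units (1 GB = 1,024 MB); the variable names are illustrative only and do not come from Power BI.

```python
# Figures quoted in the error message, in megabytes.
new_dataset_size_mb = 11_354
size_limit_mb = 10_240

# Assumption: binary units, so 1 GB = 1,024 MB.
MB_PER_GB = 1024

# Express the limit in gigabytes and compute how far the dataset is over it.
limit_gb = size_limit_mb / MB_PER_GB
overage_mb = new_dataset_size_mb - size_limit_mb

print(f"Limit: {limit_gb:.0f} GB")
print(f"Dataset exceeds the limit by {overage_mb:,} MB")
```

Under that assumption, the quoted limit works out to 10 GB and the refreshed dataset is 1,114 MB over it.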

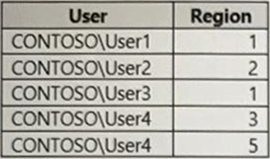

You have a dataset that contains a table named UserPermissions. UserPermissions contains the following data.

You plan to create a security role named User Security for the dataset. You need to filter the dataset based on the current users.

What should you include in the DAX expression?

- A . [UserPermissions] – USERNAME()

- B . [UserPermissions] – USERPRINCIPALNAME()

- C . [User] = USERPRINCIPALNAME()

- D . [User] = USERNAME()

- E . [User] = USEROBJECTID()
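As a rough illustration of how a row-level security filter that compares a user column with the signed-in identity behaves, here is a small Python simulation. The rows, the `Region` column, and the `apply_role_filter` helper are hypothetical; the real UserPermissions data is in the image and is not reproduced here. The sketch assumes the `User` column stores sign-in names in user principal name form (for example `alice@contoso.com`), which is the form the DAX function `USERPRINCIPALNAME()` returns.

```python
# Hypothetical sample rows; the actual table contents are not known.
user_permissions = [
    {"User": "alice@contoso.com", "Region": "East"},
    {"User": "bob@contoso.com", "Region": "West"},
    {"User": "alice@contoso.com", "Region": "North"},
]


def apply_role_filter(rows, current_upn):
    """Keep only rows whose User value equals the signed-in user's UPN.

    The comparison ignores case, which is an assumption about the
    model's collation rather than a documented guarantee.
    """
    return [row for row in rows if row["User"].lower() == current_upn.lower()]


# Simulate two different signed-in users viewing the same table.
alice_rows = apply_role_filter(user_permissions, "alice@contoso.com")
bob_rows = apply_role_filter(user_permissions, "bob@contoso.com")

print([row["Region"] for row in alice_rows])
print([row["Region"] for row in bob_rows])
```

Each user sees only the rows tagged with their own identity, which is the effect a role filter on the user column is meant to produce.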
