Amazon MLS-C01 AWS Certified Machine Learning – Specialty Online Training

Amazon MLS-C01 Online Training

The questions for MLS-C01 were last updated at Mar 01,2026.

- Exam Code: MLS-C01

- Exam Name: AWS Certified Machine Learning - Specialty

- Certification Provider: Amazon

- Latest update: Mar 01,2026

A Machine Learning Specialist is building a convolutional neural network (CNN) that will classify 10 types of animals. The Specialist has built a series of layers in a neural network that will take an input image of an animal, pass it through a series of convolutional and pooling layers, and then finally pass it through a dense and fully connected layer with 10 nodes. The Specialist would like to get an output from the neural network that is a probability distribution of how likely it is that the input image belongs to each of the 10 classes

Which function will produce the desired output?

- A . Dropout

- B . Smooth L1 loss

- C . Softmax

- D . Rectified linear units (ReLU)

A Machine Learning Specialist is building a model that will perform time series forecasting using Amazon SageMaker. The Specialist has finished training the model and is now planning to perform load testing on the endpoint so they can configure Auto Scaling for the model variant

Which approach will allow the Specialist to review the latency, memory utilization, and CPU utilization during the load test"?

- A . Review SageMaker logs that have been written to Amazon S3 by leveraging Amazon Athena and Amazon OuickSight to visualize logs as they are being produced

- B . Generate an Amazon CloudWatch dashboard to create a single view for the latency, memory utilization, and CPU utilization metrics that are outputted by Amazon SageMaker

- C . Build custom Amazon CloudWatch Logs and then leverage Amazon ES and Kibana to query and visualize the data as it is generated by Amazon SageMaker

- D . Send Amazon CloudWatch Logs that were generated by Amazon SageMaker lo Amazon ES and use Kibana to query and visualize the log data.

An Amazon SageMaker notebook instance is launched into Amazon VPC. The SageMaker notebook references data contained in an Amazon S3 bucket in another account. The bucket is encrypted using SSE-KMS. The instance returns an access denied error when trying to access data in Amazon S3.

Which of the following are required to access the bucket and avoid the access denied error? (Select THREE)

- A . An AWS KMS key policy that allows access to the customer master key (CMK)

- B . A SageMaker notebook security group that allows access to Amazon S3

- C . An 1AM role that allows access to the specific S3 bucket

- D . A permissive S3 bucket policy

- E . An S3 bucket owner that matches the notebook owner

- F . A SegaMaker notebook subnet ACL that allow traffic to Amazon S3.

A monitoring service generates 1 TB of scale metrics record data every minute A Research team performs queries on this data using Amazon Athena. The queries run slowly due to the large volume of data, and the team requires better performance

How should the records be stored in Amazon S3 to improve query performance?

- A . CSV files

- B . Parquet files

- C . Compressed JSON

- D . RecordIO

A Machine Learning Specialist needs to create a data repository to hold a large amount of time-based training data for a new model. In the source system, new files are added every hour Throughout a single 24-hour period, the volume of hourly updates will change significantly. The Specialist always wants to train on the last 24 hours of the data

Which type of data repository is the MOST cost-effective solution?

- A . An Amazon EBS-backed Amazon EC2 instance with hourly directories

- B . An Amazon RDS database with hourly table partitions

- C . An Amazon S3 data lake with hourly object prefixes

- D . An Amazon EMR cluster with hourly hive partitions on Amazon EBS volumes

A retail chain has been ingesting purchasing records from its network of 20,000 stores to Amazon S3 using Amazon Kinesis Data Firehose To support training an improved machine learning model, training records will require new but simple transformations, and some attributes will be combined. The model needs lo be retrained daily

Given the large number of stores and the legacy data ingestion, which change will require the LEAST amount of development effort?

- A . Require that the stores to switch to capturing their data locally on AWS Storage Gateway for loading into Amazon S3 then use AWS Glue to do the transformation

- B . Deploy an Amazon EMR cluster running Apache Spark with the transformation logic, and have the cluster run each day on the accumulating records in Amazon S3, outputting new/transformed records to Amazon S3

- C . Spin up a fleet of Amazon EC2 instances with the transformation logic, have them transform the data records accumulating on Amazon S3, and output the transformed records to Amazon S3.

- D . Insert an Amazon Kinesis Data Analytics stream downstream of the Kinesis Data Firehouse stream that transforms raw record attributes into simple transformed values using SQL.

A city wants to monitor its air quality to address the consequences of air pollution A Machine Learning Specialist needs to forecast the air quality in parts per million of contaminates for the next 2 days in the city as this is a prototype, only daily data from the last year is available.

Which model is MOST likely to provide the best results in Amazon SageMaker?

- A . Use the Amazon SageMaker k-Nearest-Neighbors (kNN) algorithm on the single time series consisting of the full year of data with a predictor_type of regressor.

- B . Use Amazon SageMaker Random Cut Forest (RCF) on the single time series consisting of the full year of data.

- C . Use the Amazon SageMaker Linear Learner algorithm on the single time series consisting of the full year of data with a predictor_type of regressor.

- D . Use the Amazon SageMaker Linear Learner algorithm on the single time series consisting of the full year of data with a predictor_type of classifier.

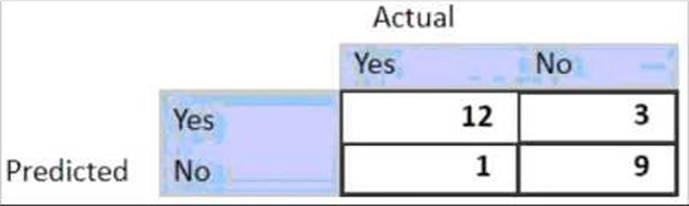

For the given confusion matrix, what is the recall and precision of the model?

- A . Recall = 0.92 Precision = 0.84

- B . Recall = 0.84 Precision = 0.8

- C . Recall = 0.92 Precision = 0.8

- D . Recall = 0.8 Precision = 0.92

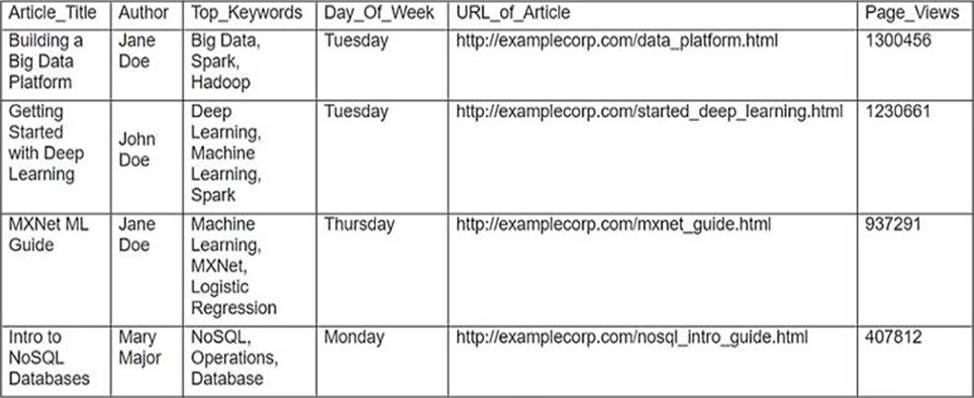

A Machine Learning Specialist is working with a media company to perform classification on popular articles from the company’s website. The company is using random forests to classify how popular an article will be before it is published A sample of the data being used is below.

Given the dataset, the Specialist wants to convert the Day-Of_Week column to binary values.

What technique should be used to convert this column to binary values.

- A . Binarization

- B . One-hot encoding

- C . Tokenization

- D . Normalization transformation

A company has raw user and transaction data stored in AmazonS3 a MySQL database, and Amazon RedShift A Data Scientist needs to perform an analysis by joining the three datasets from Amazon S3, MySQL, and Amazon RedShift, and then calculating the average-of a few selected columns from the joined data

Which AWS service should the Data Scientist use?

- A . Amazon Athena

- B . Amazon Redshift Spectrum

- C . AWS Glue

- D . Amazon QuickSight

Latest MLS-C01 Dumps Valid Version with 104 Q&As

Latest And Valid Q&A | Instant Download | Once Fail, Full Refund