Amazon MLS-C01 AWS Certified Machine Learning – Specialty Online Training

Amazon MLS-C01 Online Training

The questions for MLS-C01 were last updated at Feb 28,2026.

- Exam Code: MLS-C01

- Exam Name: AWS Certified Machine Learning - Specialty

- Certification Provider: Amazon

- Latest update: Feb 28,2026

A web-based company wants to improve its conversion rate on its landing page Using a large historical dataset of customer visits, the company has repeatedly trained a multi-class deep learning network algorithm on Amazon SageMaker However there is an overfitting problem training data shows 90% accuracy in predictions, while test data shows 70% accuracy only

The company needs to boost the generalization of its model before deploying it into production to maximize conversions of visits to purchases

Which action is recommended to provide the HIGHEST accuracy model for the company’s test and validation data?

- A . Increase the randomization of training data in the mini-batches used in training.

- B . Allocate a higher proportion of the overall data to the training dataset

- C . Apply L1 or L2 regularization and dropouts to the training.

- D . Reduce the number of layers and units (or neurons) from the deep learning network.

A Machine Learning Specialist was given a dataset consisting of unlabeled data. The Specialist must create a model that can help the team classify the data into different buckets.

What model should be used to complete this work?

- A . K-means clustering

- B . Random Cut Forest (RCF)

- C . XGBoost

- D . BlazingText

A retail company intends to use machine learning to categorize new products A labeled dataset of current products was provided to the Data Science team. The dataset includes 1 200 products. The labeled dataset has 15 features for each product such as title dimensions, weight, and price Each product is labeled as belonging to one of six categories such as books, games, electronics, and movies.

Which model should be used for categorizing new products using the provided dataset for training?

- A . An XGBoost model where the objective parameter is set to multi: softmax

- B . A deep convolutional neural network (CNN) with a softmax activation function for the last layer

- C . A regression forest where the number of trees is set equal to the number of product categories

- D . A DeepAR forecasting model based on a recurrent neural network (RNN)

A Machine Learning Specialist is building a model to predict future employment rates based on a wide range of economic factors While exploring the data, the Specialist notices that the magnitude of the input features vary greatly. The Specialist does not want variables with a larger magnitude to dominate the model

What should the Specialist do to prepare the data for model training’?

- A . Apply quantile binning to group the data into categorical bins to keep any relationships in the data by replacing the magnitude with distribution

- B . Apply the Cartesian product transformation to create new combinations of fields that are independent of the magnitude

- C . Apply normalization to ensure each field will have a mean of 0 and a variance of 1 to remove any significant magnitude

- D . Apply the orthogonal sparse Diagram (OSB) transformation to apply a fixed-size sliding window to generate new features of a similar magnitude.

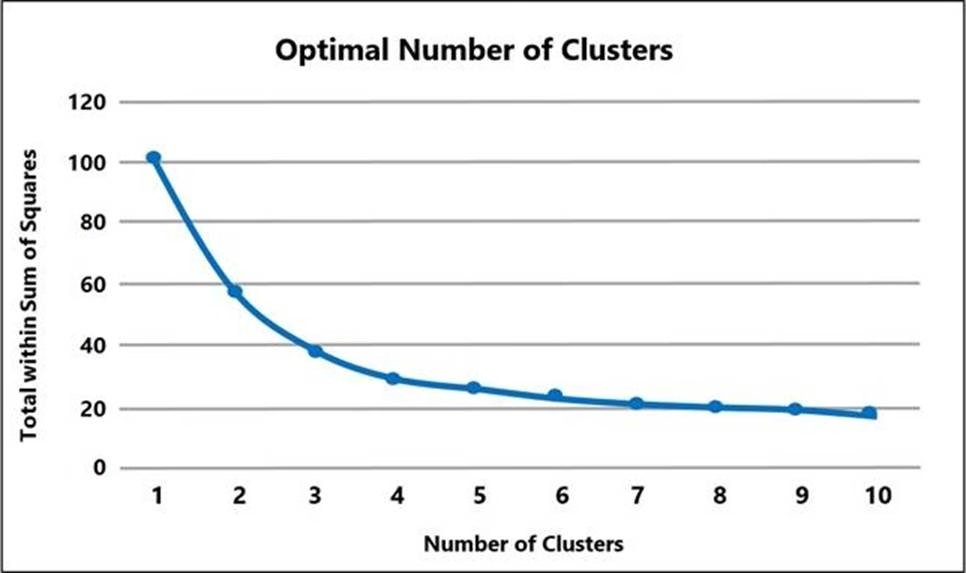

A Machine Learning Specialist prepared the following graph displaying the results of k-means for k = [1:10]

Considering the graph, what is a reasonable selection for the optimal choice of k?

- A . 1

- B . 4

- C . 7

- D . 10

A company is using Amazon Polly to translate plaintext documents to speech for automated company announcements However company acronyms are being mispronounced in the current documents.

How should a Machine Learning Specialist address this issue for future documents?

- A . Convert current documents to SSML with pronunciation tags

- B . Create an appropriate pronunciation lexicon.

- C . Output speech marks to guide in pronunciation

- D . Use Amazon Lex to preprocess the text files for pronunciation

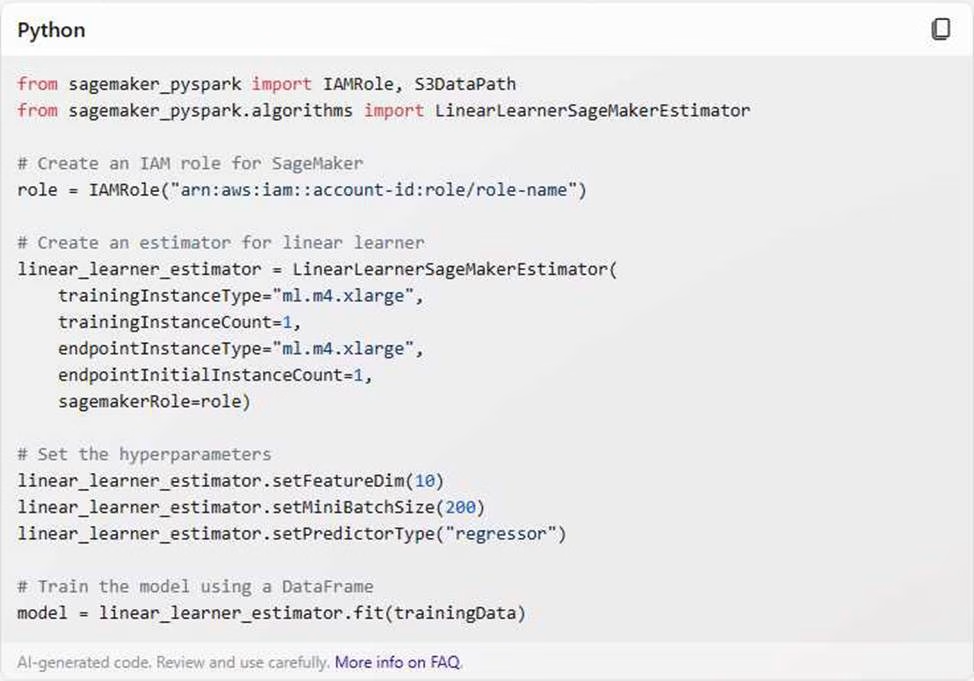

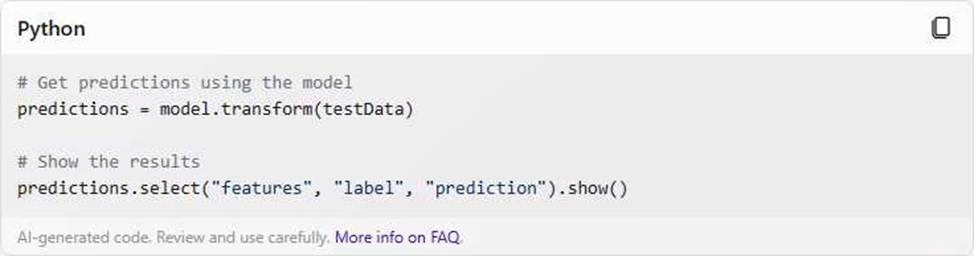

A Machine Learning Specialist is using Apache Spark for pre-processing training data As part of the Spark pipeline, the Specialist wants to use Amazon SageMaker for training a model and hosting it.

Which of the following would the Specialist do to integrate the Spark application with SageMaker? (Select THREE)

- A . Download the AWS SDK for the Spark environment

- B . Install the SageMaker Spark library in the Spark environment.

- C . Use the appropriate estimator from the SageMaker Spark Library to train a model.

- D . Compress the training data into a ZIP file and upload it to a pre-defined Amazon S3 bucket.

- E . Use the sageMakerModel. transform method to get inferences from the model hosted in SageMaker

- F . Convert the DataFrame object to a CSV file, and use the CSV file as input for obtaining inferences from SageMaker.

A Machine Learning Specialist is working with a large cybersecurily company that manages security events in real time for companies around the world. The cybersecurity company wants to design a solution that will allow it to use machine learning to score malicious events as anomalies on the data as it is being ingested. The company also wants be able to save the results in its data lake for later processing and analysis

What is the MOST efficient way to accomplish these tasks?

- A . Ingest the data using Amazon Kinesis Data Firehose, and use Amazon Kinesis Data Analytics Random Cut Forest (RCF) for anomaly detection Then use Kinesis Data Firehose to stream the results to Amazon S3

- B . Ingest the data into Apache Spark Streaming using Amazon EMR. and use Spark MLlib with k-means to perform anomaly detection Then store the results in an Apache Hadoop Distributed File System (HDFS) using Amazon EMR with a replication factor of three as the data lake

- C . Ingest the data and store it in Amazon S3 Use AWS Batch along with the AWS Deep Learning AMIs to train a k-means model using TensorFlow on the data in Amazon S3.

- D . Ingest the data and store it in Amazon S3. Have an AWS Glue job that is triggered on demand transform the new data Then use the built-in Random Cut Forest (RCF) model within Amazon SageMaker to detect anomalies in the data

A Machine Learning Specialist works for a credit card processing company and needs to predict which transactions may be fraudulent in near-real time. Specifically, the Specialist must train a model that returns the probability that a given transaction may be fraudulent.

How should the Specialist frame this business problem’?

- A . Streaming classification

- B . Binary classification

- C . Multi-category classification

- D . Regression classification

Amazon Connect has recently been tolled out across a company as a contact call center. The solution has been configured to store voice call recordings on Amazon S3

The content of the voice calls are being analyzed for the incidents being discussed by the call operators Amazon Transcribe is being used to convert the audio to text, and the output is stored on Amazon S3

Which approach will provide the information required for further analysis?

- A . Use Amazon Comprehend with the transcribed files to build the key topics

- B . Use Amazon Translate with the transcribed files to train and build a model for the key topics

- C . Use the AWS Deep Learning AMI with Gluon Semantic Segmentation on the transcribed files to

train and build a model for the key topics - D . Use the Amazon SageMaker k-Nearest-Neighbors (kNN) algorithm on the transcribed files to generate a word embeddings dictionary for the key topics

Latest MLS-C01 Dumps Valid Version with 104 Q&As

Latest And Valid Q&A | Instant Download | Once Fail, Full Refund