Amazon DOP-C01 AWS DevOps Engineer – Professional Online Training

The questions for DOP-C01 were last updated on Mar 03, 2026.

- Exam Code: DOP-C01

- Exam Name: AWS DevOps Engineer - Professional

- Certification Provider: Amazon

- Latest update: Mar 03, 2026

A company requires its internal business teams to launch resources through pre-approved AWS CloudFormation templates only. The security team requires automated monitoring when resources drift from their expected state.

Which strategy should be used to meet these requirements?

- A . Allow users to deploy CloudFormation stacks using a CloudFormation service role only. Use CloudFormation drift detection to detect when resources have drifted from their expected state.

- B . Allow users to deploy CloudFormation stacks using a CloudFormation service role only. Use AWS Config rules to detect when resources have drifted from their expected state.

- C . Allow users to deploy CloudFormation stacks using AWS Service Catalog only. Enforce the use of a launch constraint. Use AWS Config rules to detect when resources have drifted from their expected state.

- D . Allow users to deploy CloudFormation stacks using AWS Service Catalog only. Enforce the use of a template constraint. Use Amazon EventBridge (Amazon CloudWatch Events) notifications to detect when resources have drifted from their expected state.

A legacy web application stores access logs in a proprietary text format. One of the security requirements is to search application access events and correlate them with access data from many different systems. These searches should be near-real time.

Which solution offloads the processing load on the application server and provides a mechanism to search the data in near-real time?

- A . Install the Amazon CloudWatch Logs agent on the application server and use CloudWatch Events rules to search logs for access events. Use Amazon CloudSearch as an interface to search for events.

- B . Use the third-party file-input plugin Logstash to monitor the application log file, then use a custom dissect filter on the agent to parse the log entries into the JSON format. Output the events to Amazon ES to be searched. Use the Elasticsearch API for querying the data.

- C . Upload the log files to Amazon S3 by using the S3 sync command. Use Amazon Athena to define the structure of the data as a table, with Athena SQL queries to search for access events.

- D . Install the Amazon Kinesis Agent on the application server, configure it to monitor the log files, and send it to a Kinesis stream. Configure Kinesis to transform the data by using an AWS Lambda function, and forward events to Amazon ES for analysis. Use the Elasticsearch API for querying the data.
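The Lambda transformation step mentioned in the options can be pictured with a small sketch. It assumes the record-in/record-out contract used by Kinesis Data Firehose data-transformation functions (`recordId`, `result`, base64 `data`). The pipe-delimited log layout and the field names are hypothetical, since the question only says the format is proprietary.

```python
import base64
import json

# Hypothetical log layout (an assumption for illustration only):
#   <ISO timestamp>|<user>|<action>|<resource>
FIELDS = ("timestamp", "user", "action", "resource")


def parse_line(line):
    """Split one pipe-delimited line into a dict, or return None if malformed."""
    parts = line.strip().split("|")
    if len(parts) != len(FIELDS):
        return None
    return dict(zip(FIELDS, parts))


def handler(event, context=None):
    """Convert each incoming record to JSON and report a per-record status."""
    output = []
    for record in event["records"]:
        raw = base64.b64decode(record["data"]).decode("utf-8")
        parsed = parse_line(raw)
        if parsed is None:
            # Hand the record back unchanged and flag it as failed.
            output.append({
                "recordId": record["recordId"],
                "result": "ProcessingFailed",
                "data": record["data"],
            })
            continue
        payload = (json.dumps(parsed) + "\n").encode("utf-8")
        output.append({
            "recordId": record["recordId"],
            "result": "Ok",
            "data": base64.b64encode(payload).decode("ascii"),
        })
    return {"records": output}
```

Because the parsing runs in Lambda rather than in the agent, the application server only has to ship raw lines.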

A DevOps engineer is tasked with moving a mission-critical business application running in Go to AWS. The development team running this application is understaffed and requires a solution that allows the team to focus on application development. They also want to enable blue/green deployments and perform A/B testing.

Which solution will meet these requirements?

- A . Deploy the application on an Amazon EC2 instance and create an AMI of this instance. Use this AMI to create an automatic scaling launch configuration that is used in an Auto Scaling group. Use an Elastic Load Balancer to distribute traffic. When changes are made to the application, a new AMI is created and replaces the launch configuration.

- B . Use Amazon Lightsail to deploy the application. Store the application in a zipped format in an Amazon S3 bucket. Use this zipped version to deploy new versions of the application to Lightsail. Use Lightsail deployment options to manage the deployment.

- C . Use AWS CodePipeline with AWS CodeDeploy to deploy the application to a fleet of Amazon EC2 instances. Use an Elastic Load Balancer to distribute the traffic to the EC2 instances. When making changes to the application, upload a new version to CodePipeline and let it deploy the new version.

- D . Use AWS Elastic Beanstalk to host the application. Store a zipped version of the application in Amazon S3, and use that location to deploy new versions of the application using Elastic Beanstalk to manage the deployment options.

A DevOps engineer must ensure all IAM entity configurations across multiple AWS accounts in AWS Organizations are compliant with corporate IAM policies.

Which combination of steps will accomplish this? (Select TWO.)

- A . Enable AWS Trusted Advisor in Organizations for all accounts to report on noncompliant IAM entities.

- B . Configure an AWS Config aggregator in the Organizations master account for all accounts.

- C . Deploy AWS Config rules to the master account in Organizations that match corporate IAM policies.

- D . Apply an SCP in Organizations to ensure compliance of IAM entities.

- E . Deploy AWS Config rules to all accounts in Organizations that match the corporate IAM policies.

A DevOps team uses AWS CloudFormation to build their infrastructure. The security team is concerned about sensitive parameters, such as passwords, being exposed.

Which combination of steps will enhance the security of AWS CloudFormation? (Select THREE.)

- A . Create a secure string with AWS KMS and choose a KMS encryption key. Reference the ARN of the secure string, and give AWS CloudFormation permission to the KMS key for decryption.

- B . Create secrets using the AWS Secrets Manager AWS::SecretsManager::Secret resource type. Reference the secret resource return attributes in resources that need a password, such as an Amazon RDS database.

- C . Store sensitive static data as secure strings in the AWS Systems Manager Parameter Store. Use dynamic references in the resources that need access to the data.

- D . Store sensitive static data in the AWS Systems Manager Parameter Store as strings. Reference the stored value using types of Systems Manager parameters.

- E . Use AWS KMS to encrypt the CloudFormation template.

- F . Use the CloudFormation NoEcho parameter property to mask the parameter value.
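For readers unfamiliar with the mechanisms named in these options, the sketch below builds a CloudFormation template fragment in Python that uses `NoEcho` and dynamic references. The `{{resolve:...}}` patterns are the documented dynamic-reference syntax. The parameter name `ApiToken`, the secret ID `app/db-credentials`, and the path `/app/db/password` are hypothetical, and the fragment is not a complete, deployable template.

```python
import json


def ssm_secure_ref(name, version):
    """Dynamic reference to a SecureString in Systems Manager Parameter Store."""
    return "{{resolve:ssm-secure:%s:%s}}" % (name, version)


def secretsmanager_ref(secret_id, json_key):
    """Dynamic reference to one JSON key inside a Secrets Manager secret."""
    return "{{resolve:secretsmanager:%s:SecretString:%s}}" % (secret_id, json_key)


template_fragment = {
    "Parameters": {
        # NoEcho masks the value in console, CLI, and API output.
        "ApiToken": {"Type": "String", "NoEcho": True},
    },
    "Resources": {
        "Database": {
            "Type": "AWS::RDS::DBInstance",
            "Properties": {
                # Hypothetical secret ID; resolved by CloudFormation at deploy time.
                "MasterUsername": secretsmanager_ref("app/db-credentials", "username"),
                "MasterUserPassword": secretsmanager_ref("app/db-credentials", "password"),
            },
        },
    },
}

print(json.dumps(template_fragment, indent=2))
print(ssm_secure_ref("/app/db/password", 1))
```

In both reference styles the template carries only a pointer to the secret, never the plaintext value.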

A company is migrating an application to AWS that runs on a single Amazon EC2 instance. Because of licensing limitations, the application does not support horizontal scaling. The application will be using Amazon Aurora for its database.

How can the DevOps Engineer architect automated healing to automatically recover from EC2 and Aurora failures, in addition to recovering across Availability Zones (AZs), in the MOST cost-effective manner?

- A . Create an EC2 Auto Scaling group with a minimum and maximum instance count of 1, and have it span across AZs. Use a single-node Aurora instance.

- B . Create an EC2 instance and enable instance recovery. Create an Aurora database with a read replica in a second AZ, and promote it to a primary database instance if the primary database instance fails.

- C . Create an Amazon CloudWatch Events rule to trigger an AWS Lambda function to start a new EC2 instance in an available AZ when the instance status reaches a failure state. Create an Aurora database with a read replica in a second AZ, and promote it to a primary database instance when the primary database instance fails.

- D . Assign an Elastic IP address on the instance. Create a second EC2 instance in a second AZ. Create an Amazon CloudWatch Events rule to trigger an AWS Lambda function to move the Elastic IP address to the second instance when the first instance fails. Use a single-node Aurora instance.

A company has migrated its container-based applications to Amazon EKS and wants to establish automated email notifications. The notifications sent to each email address are for specific activities related to EKS components. The solution will include Amazon SNS topics and an AWS Lambda function to evaluate incoming log events and publish messages to the correct SNS topic.

Which logging solution will support these requirements?

- A . Enable Amazon CloudWatch Logs to log the EKS components. Create a CloudWatch subscription filter for each component with Lambda as the subscription feed destination.

- B . Enable Amazon CloudWatch Logs to log the EKS components. Create CloudWatch Logs Insights queries linked to Amazon CloudWatch Events events that trigger Lambda.

- C . Enable Amazon S3 logging for the EKS components. Configure an Amazon CloudWatch subscription filter for each component with Lambda as the subscription feed destination.

- D . Enable Amazon S3 logging for the EKS components. Configure S3 PUT Object event notifications with AWS Lambda as the destination.
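As background on the subscription-filter mechanism mentioned in the options: CloudWatch Logs delivers events to Lambda as a base64-encoded, gzip-compressed JSON document under `awslogs.data`. The sketch below decodes that payload and picks a topic by log-stream prefix. The prefix-to-topic mapping and the ARNs are hypothetical, and the actual SNS publish call is left out to keep the example dependency-free.

```python
import base64
import gzip
import json

# Hypothetical mapping from a log-stream name prefix to an SNS topic ARN.
TOPIC_BY_PREFIX = {
    "kube-apiserver": "arn:aws:sns:us-east-1:111122223333:eks-api",
    "authenticator": "arn:aws:sns:us-east-1:111122223333:eks-auth",
}


def decode_subscription_event(event):
    """Unpack the base64 + gzip payload that CloudWatch Logs sends to Lambda."""
    payload = base64.b64decode(event["awslogs"]["data"])
    return json.loads(gzip.decompress(payload))


def pick_topic(log_stream):
    """Return the topic ARN for the first matching prefix, or None."""
    for prefix, arn in TOPIC_BY_PREFIX.items():
        if log_stream.startswith(prefix):
            return arn
    return None


def handler(event, context=None):
    data = decode_subscription_event(event)
    topic = pick_topic(data["logStream"])
    messages = [entry["message"] for entry in data["logEvents"]]
    # A real function would publish each message to `topic` via SNS here.
    return {"topic": topic, "messages": messages}
```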

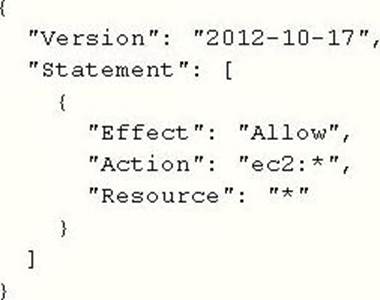

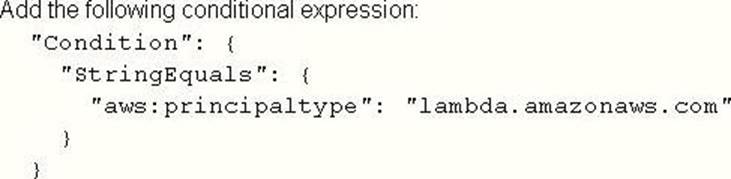

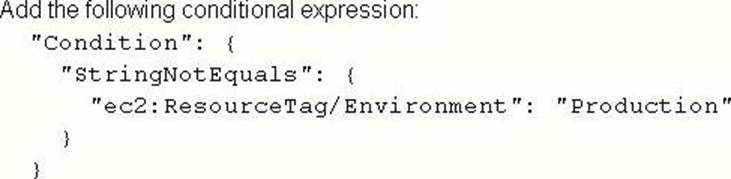

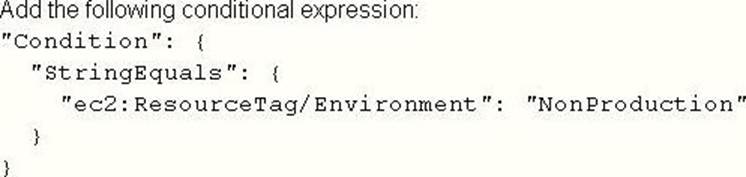

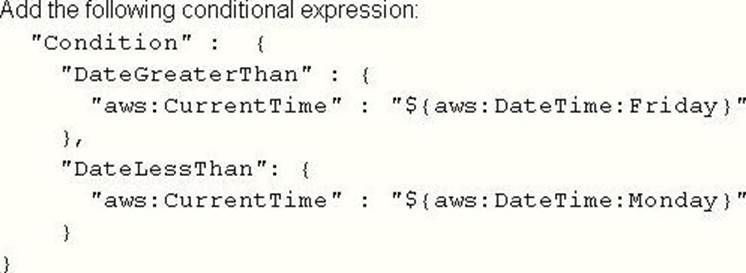

A company is reviewing its IAM policies. One policy written by the DevOps Engineer has been flagged as too permissive. The policy is used by an AWS Lambda function that issues a stop command to Amazon EC2 instances tagged with Environment: Nonproduction over the weekend.

The current policy is:

What changes should the Engineer make to achieve a policy of least permission? (Select THREE.)

A)

B)

C)

D)

E)

F)

- A . Option A

- B . Option B

- C . Option C

- D . Option D

- E . Option E

- F . Option F
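The policy and the option bodies are not reproduced in the text above, so the following is only an illustrative sketch of what a narrowly scoped policy for this kind of function can look like, not a reconstruction of any option. It assumes the function needs `ec2:DescribeInstances` to find instances and `ec2:StopInstances` to stop them, and it uses the `ec2:ResourceTag/Environment` condition key to limit stopping to tagged instances.

```python
import json

# Illustrative only: actions and scoping are assumptions, not the exam's policy.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            # Stopping is allowed only on instances tagged Environment=Nonproduction.
            "Effect": "Allow",
            "Action": "ec2:StopInstances",
            "Resource": "arn:aws:ec2:*:*:instance/*",
            "Condition": {
                "StringEquals": {"ec2:ResourceTag/Environment": "Nonproduction"}
            },
        },
        {
            # Describe calls do not support resource-level permissions.
            "Effect": "Allow",
            "Action": "ec2:DescribeInstances",
            "Resource": "*",
        },
    ],
}

print(json.dumps(policy, indent=2))
```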

You have an ELB setup in AWS with EC2 instances running behind it. You have been requested to monitor the incoming connections to the ELB.

Which of the following options satisfies this requirement?

- A . Use AWS CloudTrail with your load balancer

- B . Enable access logs on the load balancer

- C . Use a CloudWatch Logs Agent

- D . Create a custom metric CloudWatch filter on your load balancer

A DevOps engineer is deploying a new version of a company’s application in an AWS CodeDeploy deployment group associated with its Amazon EC2 instances. After some time, the deployment fails. The engineer realizes that all the events associated with the specific deployment ID are in a Skipped status, and code was not deployed in the instances associated with the deployment group.

What are valid reasons for this failure? (Select TWO.)

- A . The networking configuration does not allow the EC2 instances to reach the internet via a NAT gateway or internet gateway, and the CodeDeploy endpoint cannot be reached.

- B . The IAM user who triggered the application deployment does not have permission to interact with the CodeDeploy endpoint.

- C . The target EC2 instances were not properly registered with the CodeDeploy endpoint.

- D . An instance profile with proper permissions was not attached to the target EC2 instances.

- E . The appspec.yml file was not included in the application revision.

Almost 80% of the questions have wrong answers marked, which is enough to make you fail.